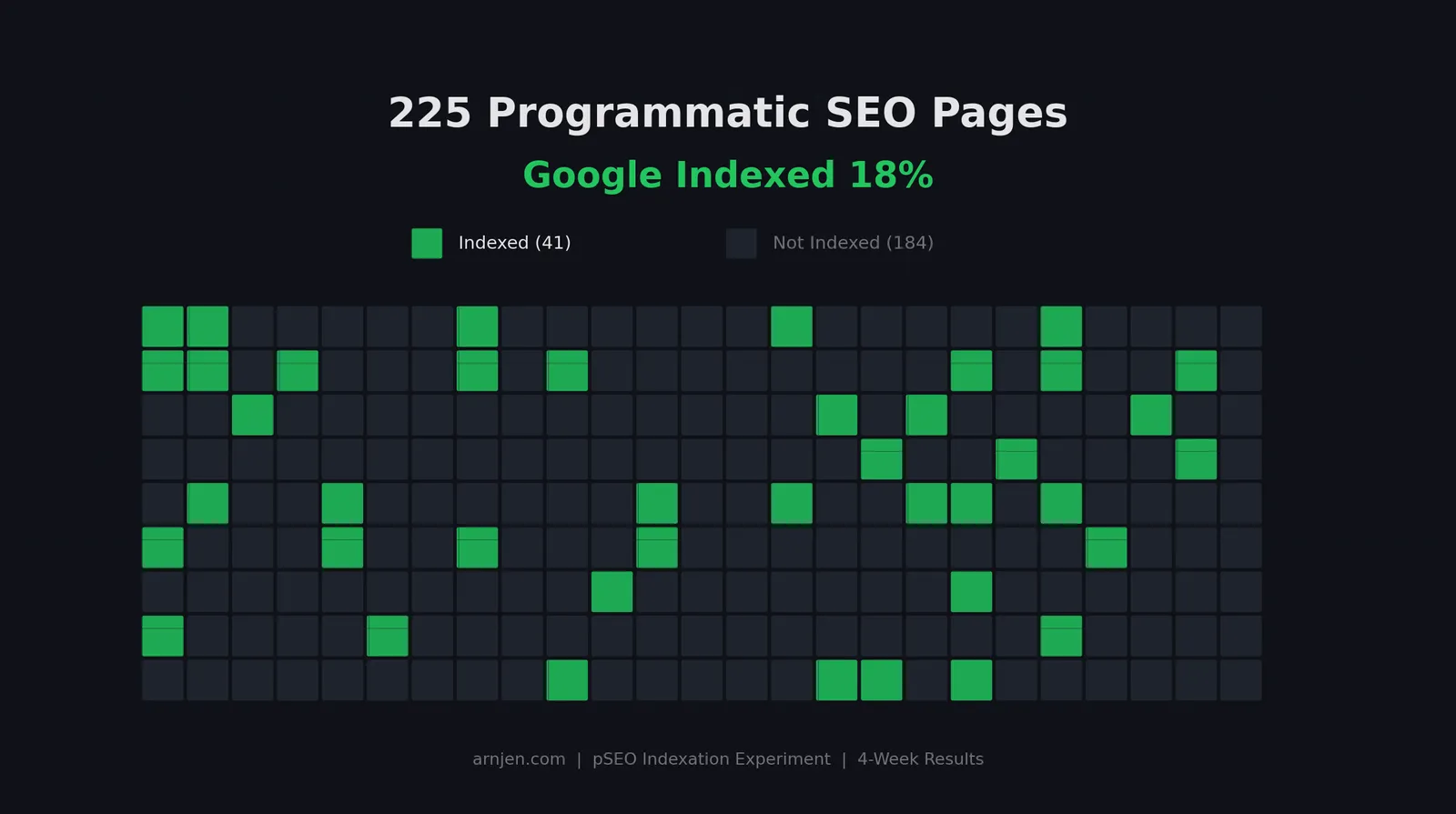

I Built 225 Programmatic SEO Pages, Google Indexed 18%. Here's the Data.

Four weeks ago, I launched 225 programmatic SEO pages and waited to see what Google would do with them. The answer: it indexed 18% and ignored the rest.

A few weeks ago, I published the full setup for this experiment. You can read the background in "Rebooting Arnjen.com With a Programmatic SEO Indexation Pace Experiment." That post covered the why, the build, and the early indexation checkpoints. This post is purely about the data.

I'm going to walk through exactly what happened across four weeks of Google Search Console data, what patterns emerged, what failed, and what I'm changing. No fluff, just numbers and analysis.

Quick Recap

For anyone landing on this cold: I built arnjen.com as a programmatic SEO experiment. 225 earnings pages across 15 business models and 15 niches, each targeting "how much do [niche] [business model] make" style queries. Every page follows the same 8-section template structure. On top of those, there are 15 business model hub pages, 15 niche hub pages, 2 blog posts, and 5 static pages. The full setup is in the original post.

Now let's talk about what actually happened.

The Data: 4 Weeks In

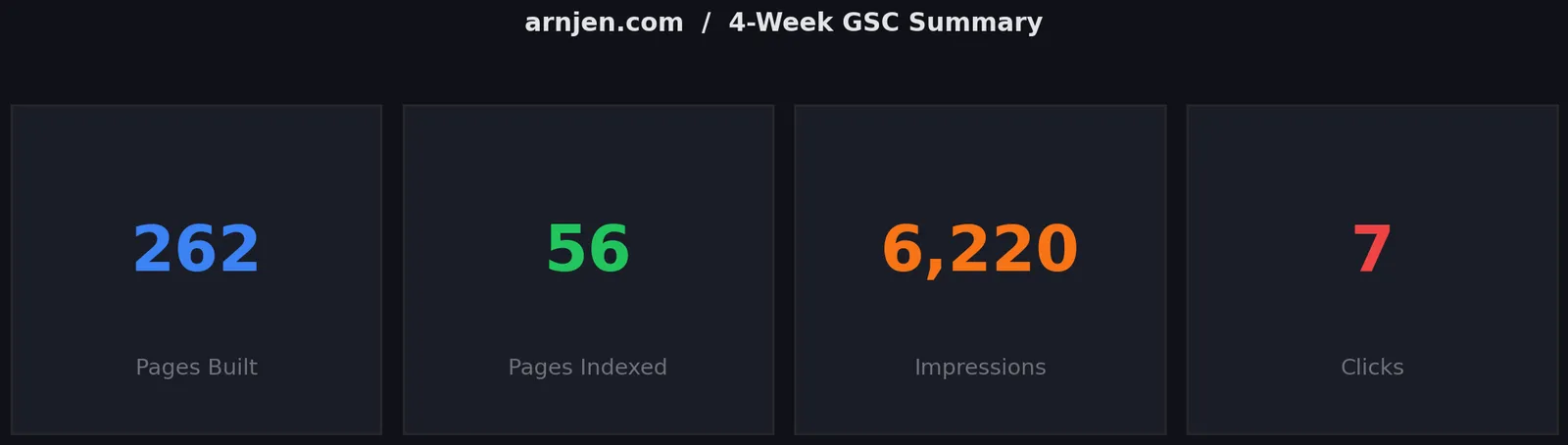

Here's the top-level picture from Google Search Console:

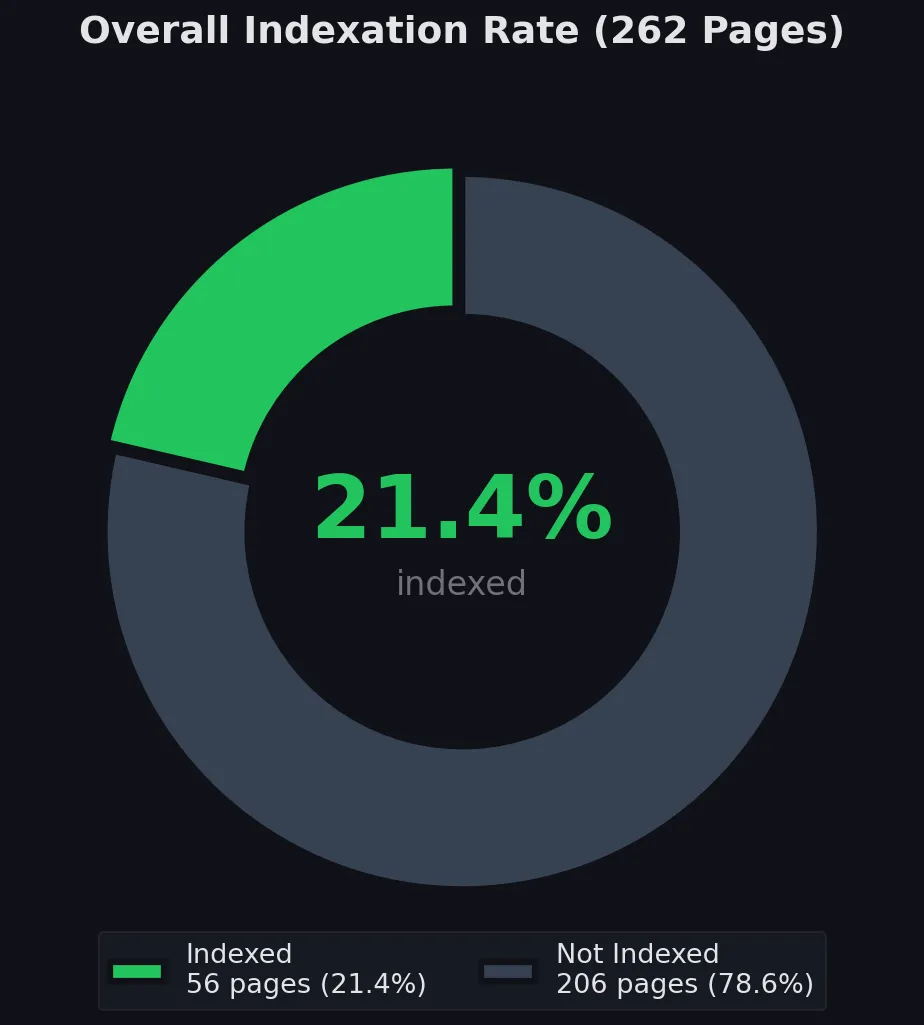

- Total pages built: 262

- Pages indexed: 56 out of 262 (21.4%)

- Total impressions: 6,220

- Total clicks: 7

- Average position: ~45

Seven clicks from 6,220 impressions. That's a click-through rate of roughly 0.11%, so low it barely registers. But let's not get ahead of ourselves. The indexation breakdown is where the real story lives.
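For reference, here is the arithmetic behind that CTR, using the Search Console totals from the list above.

```python
# Search Console totals from the four-week snapshot above.
impressions = 6_220
clicks = 7

ctr = clicks / impressions
impressions_per_click = impressions / clicks

print(f"CTR: {ctr:.2%}")                                      # CTR: 0.11%
print(f"Impressions per click: {impressions_per_click:.0f}")  # Impressions per click: 889
```

In other words, it took almost 900 impressions to earn a single click.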

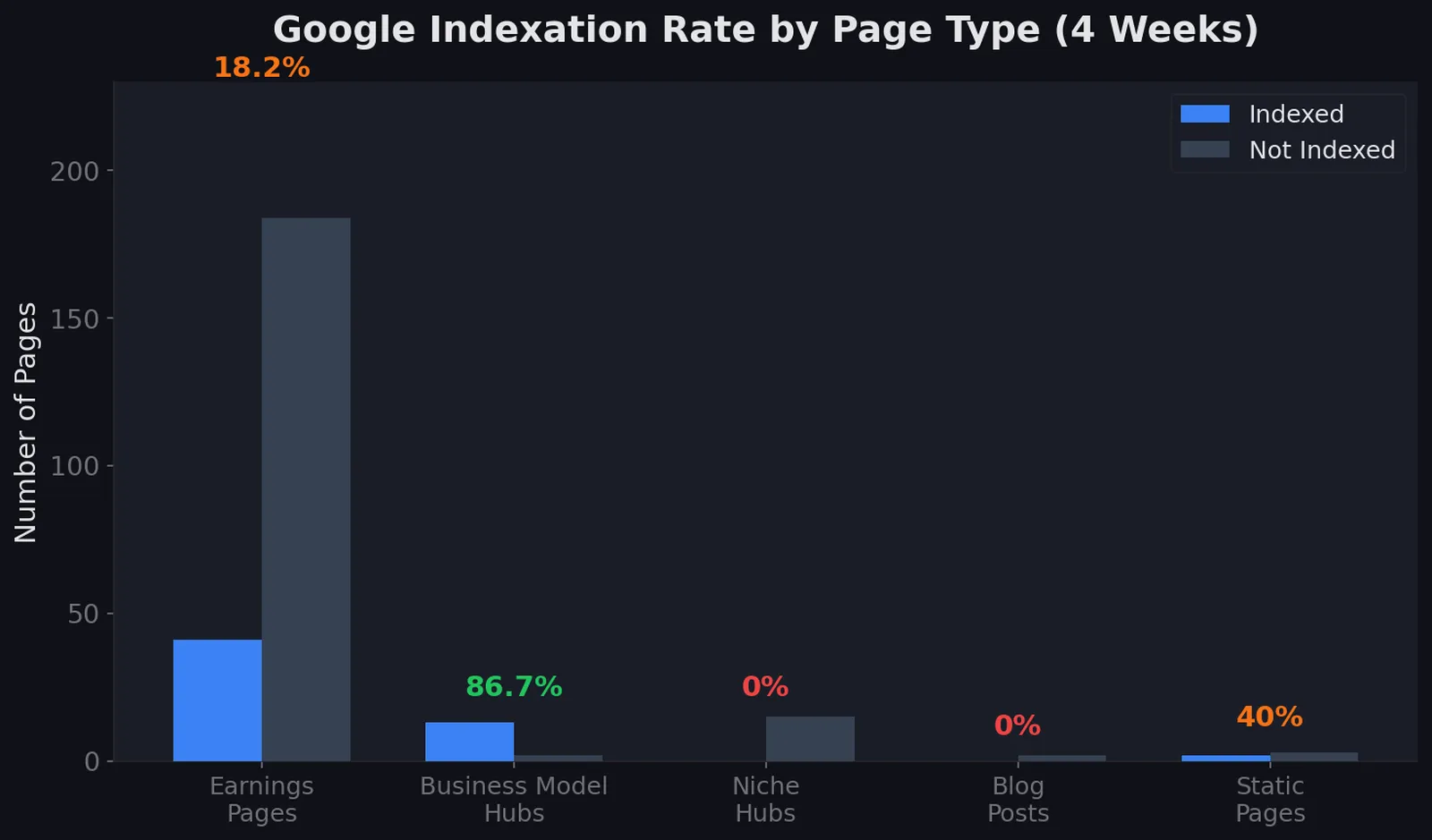

Indexation by Page Type

| Page Type | Built | Indexed | Rate |
|---|---|---|---|
| Earnings pages | 225 | 41 | 18.2% |
| Business model hubs | 15 | 13 | 86.7% |
| Niche hub pages | 15 | 0 | 0% |
| Blog posts | 2 | 0 | 0% |
| Static pages (e.g. home, about, contact) | 5 | 2 | 40% |
| Total | 262 | 56 | 21.4% |
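Each rate in the table is just indexed ÷ built for that page type. A small script like this one (the counts are copied from the table) makes it easy to recheck the numbers as the weekly data changes.

```python
# (built, indexed) per page type, copied from the table above.
PAGE_TYPES = {
    "Earnings pages": (225, 41),
    "Business model hubs": (15, 13),
    "Niche hub pages": (15, 0),
    "Blog posts": (2, 0),
    "Static pages": (5, 2),
}


def indexation_rate(built: int, indexed: int) -> float:
    """Share of built pages that Google reports as indexed."""
    return indexed / built if built else 0.0


for name, (built, indexed) in PAGE_TYPES.items():
    print(f"{name}: {indexed}/{built} = {indexation_rate(built, indexed):.1%}")

total_built = sum(built for built, _ in PAGE_TYPES.values())
total_indexed = sum(indexed for _, indexed in PAGE_TYPES.values())
# Totals come to 56/262 = 21.4%.
print(f"Total: {total_indexed}/{total_built} = {indexation_rate(total_built, total_indexed):.1%}")
```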

The pattern here is stark. Business model hub pages indexed at 86.7%. These are the pages like "/youtube" or "/etsy" that each aggregate all 15 niches under that business model. Google clearly saw these as useful category pages.

Meanwhile, the niche hub pages indexed at 0%. These are the inverse: pages like "/gaming" or "/food" that aggregate all 15 business models for that niche. Zero out of fifteen. Same site, same internal linking, completely different result.

The earnings pages (the core of the experiment) landed at 18.2%. Out of 225 pages, Google picked up 41 and left 184 sitting in the "Discovered - currently not indexed" or "Crawled - currently not indexed" buckets.

And both blog posts, including the original experiment writeup? Not indexed. Which is ironic given that I'm writing a follow-up to a post Google hasn't even acknowledged.

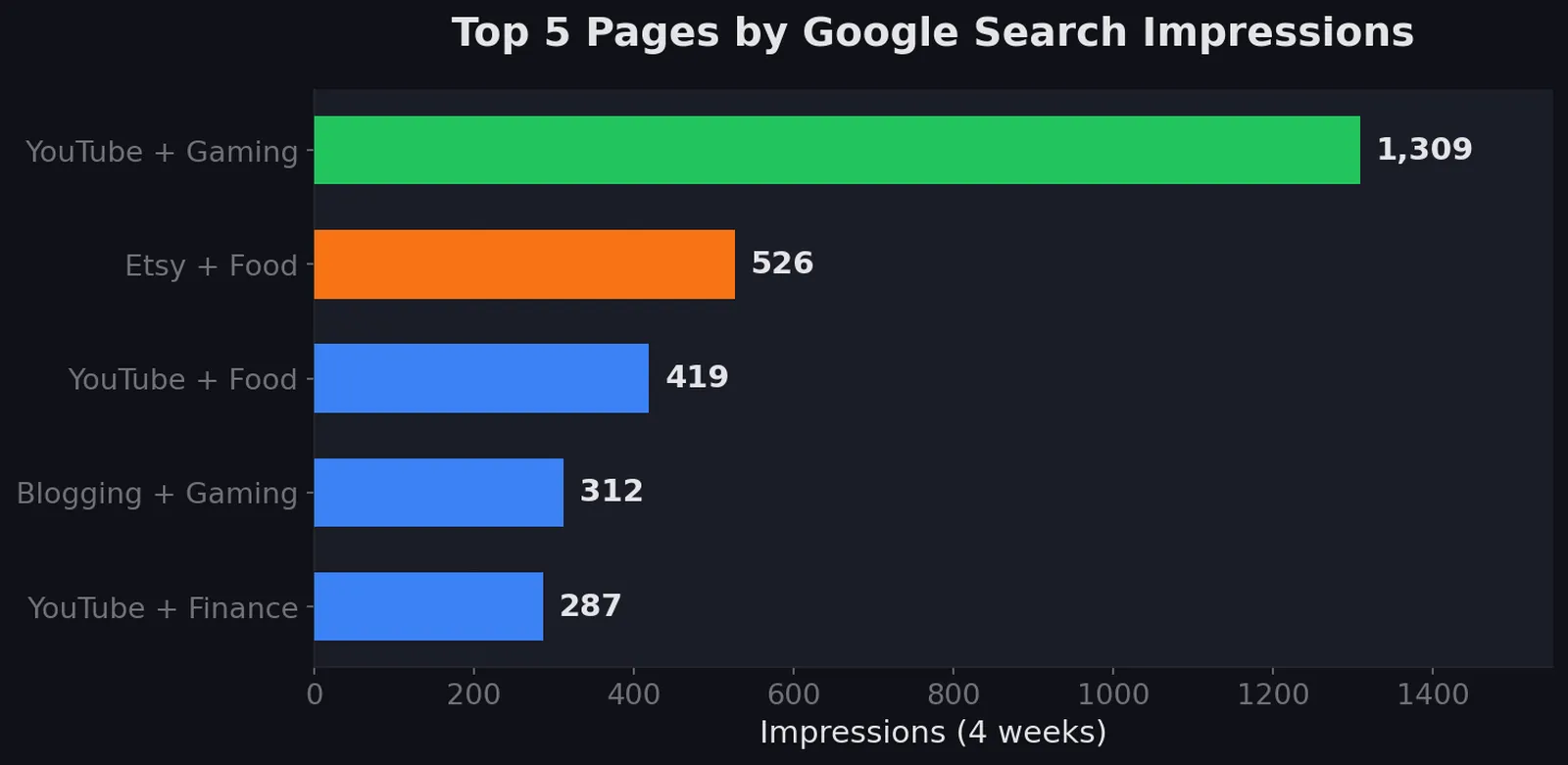

Top Pages by Impressions

| Page | Impressions |
|---|---|
| YouTube + Gaming | 1,309 |
| Etsy + Food | 526 |
| YouTube + Food | 419 |
| Blogging + Gaming | 312 |
| YouTube + Finance | 287 |

YouTube dominates the top of this list. Three out of five top performers are YouTube combinations. Gaming and Food are the standout niches. This makes sense. "How much do gaming YouTubers make" is the kind of query people actually search in high volume.

But here's the frustrating part: several of these pages are ranking in positions 5-10 and getting zero clicks. That's a title and snippet problem. When you're sitting on page one but nobody clicks, your SERP presentation is failing.

What Worked

YouTube combinations dominated. Any page pairing YouTube with a popular niche generated meaningful impressions. YouTube + Gaming alone pulled over 1,300 impressions, more than the bottom 150 pages combined. If I were optimizing purely for traffic, I'd double down on YouTube-adjacent content immediately.

Hub pages indexed fast and reliably. The business model hub pages hit 86.7% indexation. These pages have unique content by nature. Each one aggregates 15 different niche combinations, creating a page that doesn't look like any other page on the site. Google treated them as legitimate category pages.

Sitemap submission on day one paid off. I submitted the sitemap immediately after launch. Google started crawling within hours. The pages that were going to get indexed made it in within the first two weeks. After that, indexation essentially flatlined, which tells me Google made its quality decisions early and moved on.
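This post doesn't cover how the sitemap itself was generated. Here is a minimal sketch, assuming you have a plain list of URL paths, that builds a sitemap following the sitemaps.org protocol with Python's standard library. You then submit the file in Search Console or reference it from robots.txt. The paths and date in the usage line are placeholders.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(base_url: str, paths: list[str], lastmod: date) -> str:
    """Return a sitemap.xml document listing base_url + path for each path."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path in paths:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + path
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder usage: hub paths like the ones mentioned in this post.
print(build_sitemap("https://arnjen.com", ["/youtube", "/gaming"], date(2024, 1, 1)))
```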

Pages targeting less competitive queries got through. The earnings pages that did get indexed tended to target more specific, lower-competition combinations. This suggests Google was more willing to index pages where it didn't already have strong results from authoritative sources.

What Didn't Work

Identical template structure across 225 pages. This is the big one. Every single earnings page uses the same 8-section structure: overview, revenue breakdown, success factors, income ranges, getting started, FAQs, related pages, sources. When you're looking at 225 pages with the same skeleton, the pattern is obvious. And Google noticed.

Thin niche hub pages. The niche hubs were basically card grids linking to the 15 business models for that niche. No real content, no unique value, just navigation. Google indexed zero of them. Compare that to the business model hubs, which had more substantive content and indexed at 86.7%. The lesson is clear: hub pages need to earn their place with real content, not just link collections.

No backlinks. Four weeks in and the domain has zero backlinks. Not one. For a brand new domain with no history, this means Google has no external quality signals to work with. Every indexation decision is being made purely on crawl-level content analysis.

Blog posts with no internal linking strategy. Both blog posts are effectively orphaned in the content structure: they're linked from the blog index page but nowhere else. No earnings pages link to them, and no hub pages reference them. In a site architecture sense, they're dead ends.

This connects back to what I identified in the original post as the "indexation stall": Google crawled the pages but decided not to index them. The lack of domain authority and external signals gave Google no reason to push past the initial quality threshold.

Why Google Rejected 82%

Let me be direct about what I think happened here.

The duplicate template structure is the primary cause. When Google's helpful content system evaluates a site and sees that 225 out of 262 pages follow the exact same section structure with the same heading patterns, it's going to flag that as templated, low-value content. It doesn't matter that each page has different data. The structural fingerprint is identical.

Think about it from Google's perspective. It crawls page 1: overview, revenue breakdown, success factors, income ranges, getting started, FAQs, related pages, sources. Then page 2: same thing. Page 3: same thing. By page 20, the pattern is undeniable.
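Google doesn't publish how it detects templated pages, so what follows is only a rough proxy and not a model of Google's systems. The idea is to reduce each page to its ordered list of section headings and count how many pages share the same sequence. The sketch assumes each section starts with an `<h2>`, and the page paths and HTML are made up.

```python
from collections import Counter
from html.parser import HTMLParser


class H2Collector(HTMLParser):
    """Collects the text of every <h2> element, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: list[str] = []
        self._inside_h2 = False

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._inside_h2 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self._inside_h2 = False

    def handle_data(self, data):
        if self._inside_h2:
            self.headings[-1] += data


def structural_fingerprint(html: str) -> tuple[str, ...]:
    """Heading sequence with whitespace collapsed and text lowercased."""
    parser = H2Collector()
    parser.feed(html)
    parser.close()
    return tuple(" ".join(h.split()).lower() for h in parser.headings)


# Hypothetical pages: two earnings pages sharing one skeleton, plus one hub page.
pages = {
    "/youtube/gaming": "<h2>Overview</h2><p>...</p><h2>Revenue Breakdown</h2>",
    "/etsy/food": "<h2>Overview</h2><p>...</p><h2>Revenue Breakdown</h2>",
    "/youtube": "<h2>Top Niches</h2><h2>How the Model Works</h2>",
}
counts = Counter(structural_fingerprint(html) for html in pages.values())
shape, n = counts.most_common(1)[0]
print(f"{n}/{len(pages)} pages share {shape}")
```

Run against the real site, a result like "225/262 pages share one shape" would put a number on the pattern described above.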

The pages that did get indexed were likely the ones where the content happened to be more differentiated, either because the underlying data was richer, the queries were less competitive, or the specific niche/model combination produced more unique text. The 18% that made it through weren't random. They were the ones that passed a quality threshold despite the template.

There's also a domain authority factor at play. A brand new domain with zero backlinks is starting from the worst possible position. Google gives established domains the benefit of the doubt on borderline content. A new domain gets no such grace period. Every page has to individually justify its existence.

The niche hub pages at 0% indexation versus business model hubs at 86.7% is the clearest evidence of the content quality threshold. Both page types serve the same architectural purpose. Both have similar internal linking. The difference is content depth. The business model hubs had substantive introductory content. The niche hubs were thin card grids. Google made the distinction and acted on it.

Here's another telling detail: if you run a site:arnjen.com search in Google, the majority of results are omitted. Google shows the first page of results and then tells you the rest have been filtered out as "very similar." That's Google explicitly saying: "We know these pages exist. We crawled them. We chose to suppress them." It's not a crawling problem. It's a quality and differentiation problem. Google is treating most of these pages as near-duplicates of each other, which honestly, from a template structure standpoint, they are.

What I'm Changing

Based on four weeks of data, here's the revised strategy:

1. Template differentiation. I'm building 5 different section structures, one for each business model category. Creator economy pages (YouTube, TikTok, Blogging) will have different sections than marketplace pages (Etsy, Amazon) or service-based pages (freelancing, consulting). The goal is to break the structural fingerprint that Google is detecting.

2. Enriching hub pages. Every hub page is getting a minimum of 800 words of real, substantive content. Not filler, but actual analysis of the business model or niche with original insights. The business model hubs proved that content-rich hub pages get indexed. The niche hubs proved that thin ones don't.

3. Cross-dimensional internal linking. Right now, the linking structure is mostly vertical: hub to earnings page. I'm adding horizontal links between related earnings pages across different dimensions. A YouTube + Gaming page should link to YouTube + Finance and to Twitch + Gaming. This creates a web of contextual relationships instead of isolated silos.

4. Building a linkable asset. I'm creating a free niche revenue calculator: a simple tool where someone can input their niche and business model to see estimated earnings ranges. It's meant to be something worth linking to, which should earn the backlinks this domain desperately needs.

5. Case studies for E-E-A-T signals. Posts like this one serve a dual purpose. They demonstrate real experience with the subject matter (Experience and Expertise in Google's E-E-A-T framework), and they give the site content that's genuinely unique. Nobody else has this specific data.
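The horizontal linking from step 3 is easy to generate because every earnings page sits at one (business model, niche) coordinate in a 15 × 15 grid. Its siblings share either the model or the niche. This minimal sketch assumes a hypothetical `/{model}/{niche}` URL scheme (the post doesn't specify the real one). It takes the first few siblings in list order rather than ranking them by relevance.

```python
def horizontal_links(
    model: str,
    niche: str,
    models: list[str],
    niches: list[str],
    per_axis: int = 3,
) -> list[str]:
    """Links from one earnings page to siblings along both dimensions.

    Same model, different niche:  /youtube/gaming -> /youtube/finance
    Same niche, different model:  /youtube/gaming -> /twitch/gaming
    """
    same_model = [f"/{model}/{n}" for n in niches if n != niche][:per_axis]
    same_niche = [f"/{m}/{niche}" for m in models if m != model][:per_axis]
    return same_model + same_niche


# Hypothetical subset of the 15 models and 15 niches.
models = ["youtube", "twitch", "etsy"]
niches = ["gaming", "finance", "food"]
print(horizontal_links("youtube", "gaming", models, niches))
# ['/youtube/finance', '/youtube/food', '/twitch/gaming', '/etsy/gaming']
```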

Lessons for Anyone Doing Programmatic SEO

If you're planning a pSEO project, here's what this data suggests:

Don't launch all pages at once with identical templates. This is the single biggest takeaway. If I could rerun this experiment, I'd launch with 5 different template structures from day one. Structural variety signals that each page was considered individually, even if they were generated programmatically.
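One way to wire this into a page generator is to map each business model to a template category and give each category its own section list. This sketch rests on some assumptions. Only the creator, marketplace, and service categories and their example models come from this post. The section names are hypothetical placeholders, and the other two categories are left out because the post doesn't name them.

```python
# Business model -> template category (categories and examples from this post).
CATEGORY_BY_MODEL = {
    "youtube": "creator",
    "tiktok": "creator",
    "blogging": "creator",
    "etsy": "marketplace",
    "amazon": "marketplace",
    "freelancing": "service",
    "consulting": "service",
}

# Hypothetical section skeletons; the point is only that they differ per category.
SECTIONS_BY_CATEGORY = {
    "creator": ["Audience size and income", "Monetization streams", "Platform payouts", "FAQs"],
    "marketplace": ["Margins and fees", "Product economics", "Seasonality", "FAQs"],
    "service": ["Rates and pricing", "Client acquisition", "Capacity limits", "FAQs"],
}


def sections_for(model: str) -> list[str]:
    """Section list for an earnings page, chosen by its model's category."""
    category = CATEGORY_BY_MODEL[model.lower()]
    return SECTIONS_BY_CATEGORY[category]


print(sections_for("TikTok"))
```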

Invest in hub page quality. Hub pages are your best shot at early indexation. They're naturally more unique than leaf pages because they aggregate different content. Make them substantial, 800+ words minimum with real analysis, not just card grids.
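A simple pre-publish check can enforce the 800-word floor. This sketch assumes you already have each hub's visible body text with the HTML stripped. It counts whitespace-separated words, which is only a rough measure. The hub slugs and text in the usage lines are illustrative.

```python
MIN_HUB_WORDS = 800  # floor suggested in this post


def word_count(text: str) -> int:
    """Rough word count: whitespace-separated tokens."""
    return len(text.split())


def thin_hubs(hubs: dict[str, str], minimum: int = MIN_HUB_WORDS) -> list[str]:
    """Slugs of hub pages whose body text falls below the word floor."""
    return sorted(slug for slug, body in hubs.items() if word_count(body) < minimum)


# Illustrative input: a card-grid hub vs. a hub with a long written introduction.
hubs = {"/gaming": "YouTube Twitch Etsy " * 5, "/youtube": "analysis " * 900}
print(thin_hubs(hubs))  # ['/gaming']
```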

Build at least one linkable asset before expecting indexation. A brand new domain with zero backlinks is fighting uphill on every page. Having even a handful of quality backlinks to a tool, calculator, or original research piece changes the math on Google's quality threshold.

Start with 20-30 pages, get them indexed, then scale. Launching 225 pages at once on a new domain was aggressive. A phased approach (launch 20 pages, verify indexation, optimize, then launch the next batch) gives you feedback loops that a mass launch doesn't.
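The phased approach amounts to a simple gate: release pages in fixed-size batches and only open the next batch once enough of the current ones are indexed. In this minimal sketch, the batch size of 25 falls within the 20-30 range above. The 50% gate is a hypothetical threshold that this experiment did not test.

```python
from collections.abc import Iterator


def batches(pages: list[str], size: int = 25) -> Iterator[list[str]]:
    """Split the full page list into launch batches of at most `size` pages."""
    for start in range(0, len(pages), size):
        yield pages[start:start + size]


def ready_for_next_batch(launched: int, indexed: int, threshold: float = 0.5) -> bool:
    """Gate: proceed only when the share of launched pages indexed meets the threshold."""
    return launched > 0 and indexed / launched >= threshold


all_pages = [f"page-{i}" for i in range(225)]  # placeholder page IDs
plan = list(batches(all_pages))
print(len(plan))                     # 9 batches of 25
print(ready_for_next_batch(25, 12))  # False (48% indexed)
print(ready_for_next_batch(25, 13))  # True (52% indexed)
```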

Domain authority matters more than content volume. I had 262 pages of content. Google indexed 56. A site with 30 pages and 10 quality backlinks would likely outperform this on indexation rate and traffic. Volume without authority is just noise.

Watch your CTR, not just your rankings. Several pages ranked in positions 5-10 and got zero clicks. Ranking means nothing if the title and meta description don't compel a click. I need to revisit every title tag for the pages that are ranking but not earning clicks.
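To build that list, you can filter a Search Console performance export for pages with an average position between 5 and 10 and zero clicks. This sketch assumes a CSV with `Top pages`, `Clicks`, and `Position` columns. Those column names are an assumption, so check the headers in your own export and adjust them before relying on the output.

```python
import csv
import io


def unclicked_page_one(csv_text: str, best: float = 5.0, worst: float = 10.0) -> list[str]:
    """Pages averaging position `best`..`worst` that earned zero clicks."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        row["Top pages"]
        for row in reader
        if int(row["Clicks"]) == 0 and best <= float(row["Position"]) <= worst
    ]


# Usage, assuming the export was saved as pages.csv ("utf-8-sig" tolerates a BOM):
# with open("pages.csv", newline="", encoding="utf-8-sig") as f:
#     print(unclicked_page_one(f.read()))
```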

What's Next

The experiment continues. Here's the immediate roadmap:

Regenerating top performers first. The 41 indexed earnings pages are getting rebuilt with differentiated templates. These pages have already proven they can get through Google's filter, so giving them better content should improve their rankings and CTR.

Tracking indexation weekly. The target is 50% indexation within 90 days of implementing the changes. That means going from 56 indexed pages to roughly 130. It's aggressive but achievable if the template differentiation works.
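Here is the target turned into concrete numbers. The weekly figure assumes a steady pace over the 90 days, which real indexation rarely follows, so treat it as a rough checkpoint.

```python
pages_built = 262
currently_indexed = 56
target_rate = 0.50
days = 90

target_indexed = round(pages_built * target_rate)  # 131 pages
still_needed = target_indexed - currently_indexed  # 75 more pages
weekly_pace = still_needed / (days / 7)            # ~5.8 pages per week

print(target_indexed, still_needed, f"{weekly_pace:.1f}")  # 131 75 5.8
```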

Another follow-up post when there's data. I'm not going to write the next update until there's a meaningful delta to report. Probably 6-8 weeks from now, once the template changes have had time to be recrawled and reevaluated.

The honest summary of this experiment so far: programmatic SEO works, but only if Google believes your pages aren't programmatic. The irony isn't lost on me. The entire approach is about creating pages at scale, but the pages that succeed are the ones that don't look like they were created at scale.

Eighteen percent indexation isn't a failure. It's a baseline. The question now is whether the changes I'm making can push that number up, or whether this domain needs more fundamental authority signals before Google will let the rest through.

I'll report back with the data. In the meantime, check out https://degensourcer.com